Ciena is advancing a new wave of optical innovations designed to meet rising capacity demands. In this conversation, Ciena’s Helen Xenos sits down with SVP of Global R&D Dino DiPerna to explore the products, technologies, and architectural changes shaping the next generation of connectivity.

This week, we previewed several innovations Ciena is developing to help redefine the future of optical networking as AI-driven workloads continue to accelerate capacity demands.

To provide more context behind these announcements—and what they mean for the future of networking—I had the opportunity to sit down with Dino DiPerna, Ciena’s Senior Vice President of Global Research & Development. Having worked closely with Dino for many years, I always learn something new from our conversations.

In this discussion, we explore how AI is reshaping network architectures and the innovations required to support the next generation of connectivity.

Helen Xenos: AI is reshaping network demands at an unprecedented pace. What fundamentally changed in the past year that makes this moment different?

Dino DiPerna: For me, the biggest shift is that training is no longer confined to a single data center facility.

As machine learning models grow and ingest more data, demand for capacity across the WAN has already been increasing. But what’s really changed over the past 12 to 18 months is that space and power constraints are forcing AI training clusters to expand beyond a single facility.

When that happens, the network becomes the critical fabric connecting distributed training. That shift alone is driving one or two orders of magnitude more network capacity.

Our cloud provider customers understand that to fully capitalize on their compute investments, the network has to scale alongside it. Otherwise, the network becomes the bottleneck that limits the value of that AI infrastructure. That shift in demand is also changing how AI networks themselves are being designed.

How is AI traffic different from traditional cloud traffic, and why does that require a rethink of network architecture?

One of the biggest architectural shifts we’re seeing is what many refer to as scale across. For the first time, organizations are building dedicated networks for AI back-end infrastructure. These environments require enormous cross-sections of capacity to support distributed AI training, which means the network has to be designed very differently from traditional cloud traffic patterns.

We’re working very closely with hyperscalers to optimize architectures that can deliver the capacity, efficiency, and reliability they need.

At the same time, the pace of deployment is extraordinary. AI infrastructure only delivers value once the connectivity is in place, so these networks are being built and turned up at a very rapid pace. That’s forcing innovation not just in the products themselves, but across the entire deployment process—from automated service activation and advanced network telemetry to solution validation and staging—to help customers accelerate rollout and get these networks operational as quickly as possible.

As AI clusters expand beyond a single data center, the physical transport infrastructure supporting those networks must evolve as well.

This is where hyper-rail photonics come in. What challenges are hyperscalers and network operators facing today that led to this innovation?

As these scale-across networks extend beyond 100-150 kilometers, optical line amplification becomes essential.

The challenge is that the facilities supporting those networks—traditional line amplifier huts—have very real space and power limitations. At the same time, wholesale providers and hyperscalers are deploying links that can involve hundreds of fiber pairs across long distances. In many cases, operators can only light a fraction of those fibers because the hut infrastructure simply doesn’t have the space or power to support them.

That’s where density and energy efficiency become even more critical. Quite frankly, while EDFA technology has been the workhorse of optical networks for more than 30 years, at its core, the technology has barely changed. As networks scale to support AI workloads, this clearly calls for significant innovation.

With hyper-rail, we’ve rethought line amplifier design from the ground up—across both hardware and software—to dramatically improve space and power efficiency. For example, with RLS Hyper-Rail, we can achieve up to 32 times the density per rack compared to traditional approaches.

This is really just the beginning. As AI infrastructure continues to expand, we’ll need to keep innovating in this part of the network to support the scale and efficiency that our customers will require going forward. Of course, scaling AI networks isn’t only about the photonic line infrastructure—it also requires continued innovation in coherent optics.

I know our teams continue to drive industry-leading innovation in coherent optics. It’s been 18 months since we started shipping WaveLogic 6 Extreme—still the industry’s only coherent 1.6 Tb/s solution—and development is now well underway for 1.6 Tb/s coherent pluggables.

What are the key challenges in developing 1600ZR/ZR+ pluggables, and why was investing in 2nm CMOS the right strategic move?

The biggest challenge with 1.6T coherent pluggables is achieving the very high baud and associated analog bandwidth while staying within the strict power envelope of a pluggable form factor.

This is where leading-edge CMOS continues to be critical. As you know, we’ve consistently invested in the most advanced semiconductor nodes because they enable us to achieve both higher performance and better power efficiency designs. We were the first with 7nm coherent DSP, first with 3nm DSP, and once again, we will fully leverage the latest 2nm CMOS technology for the next generation of DSPs.

Moving to 2nm provides the raw analog performance and power efficiency that enables us to design and deliver 1600ZR/ZR+ pluggables at scale.

How do full-spectrum coherent solutions simplify scaling AI infrastructure? What challenges do these solve?

When pluggable optics first emerged, the goal was to eliminate inefficient back-to-back grey optics and reduce cost and power. But operationally, networks still scaled by lighting individual wavelengths over an extended period of time.

With AI scale-across architectures, that model starts to break down. Operators are often lighting entire fibers—or even multiple fibers—from day one. When you need that much capacity immediately, lighting up individual wavelengths at a time becomes inefficient and adds unnecessary cost and operational complexity.

Full-spectrum transponders take a different approach. Instead of deploying capacity one wavelength at a time, you can light the full spectrum on a fiber pair in a single system. That simplifies installation, reduces hardware overhead, and makes it much more efficient to deploy large chunks of capacity quickly.

As networks scale to support AI infrastructure, this kind of efficiency becomes increasingly important.

What strategic role does co-packaged or near-package optics play in the future of AI networking, and how does Ciena’s Vesta platform accelerate that vision?

Co-packaged and near-package optics are really about improving efficiency across the entire AI networking stack. As switching capacity inside AI clusters continues to grow, the traditional electrical interconnects between switches and optical modules become increasingly constrained by power and signal integrity.

By bringing optics much closer to the XPU and switching devices, you can significantly improve power efficiency and density in order to continue scaling the overall system capacity.

This is where solutions like Vesta come in. Vesta is designed as a high-capacity optical interconnect engine that enables more efficient connectivity between XPU and switching infrastructure and the optical network.

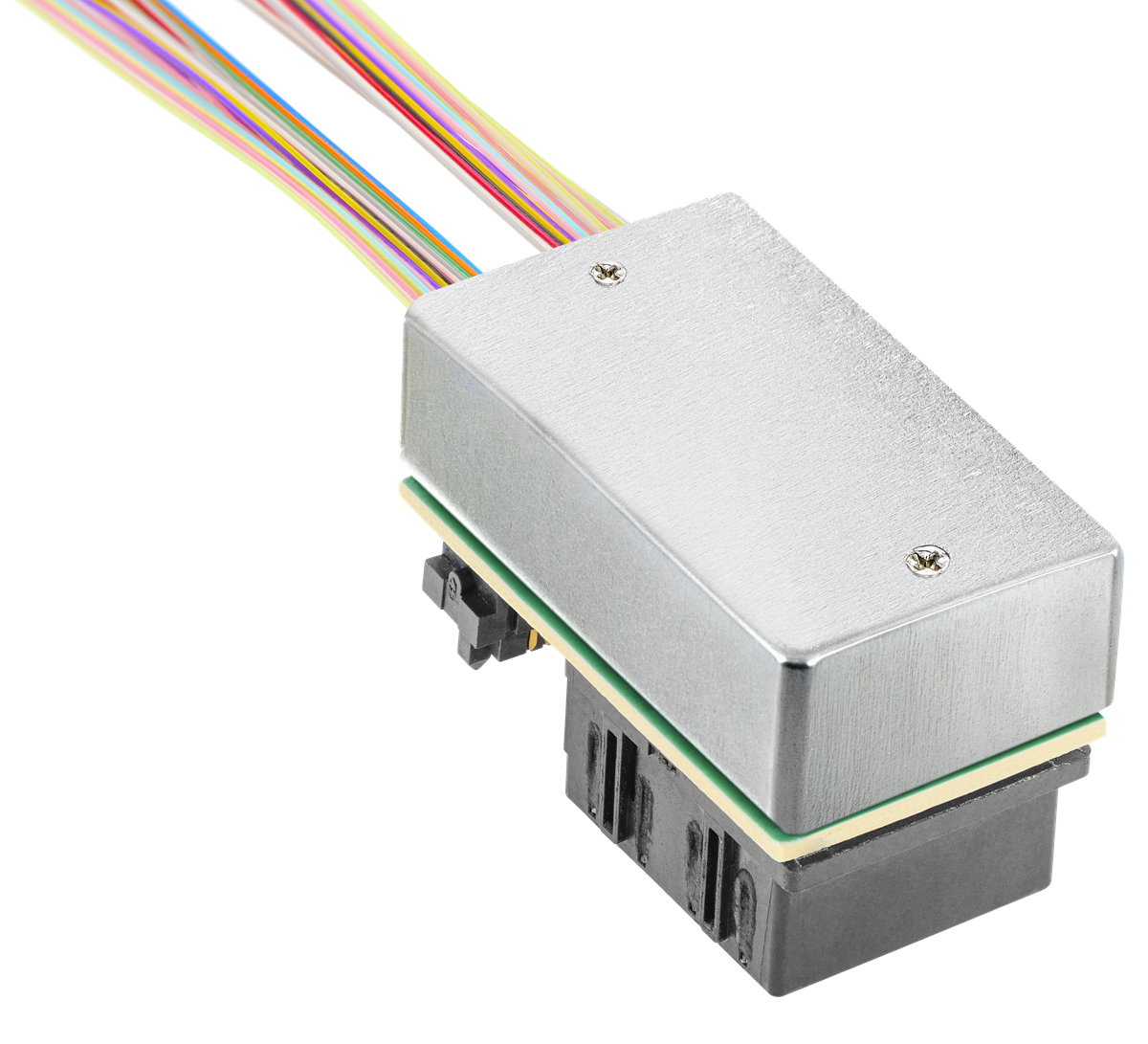

Vesta 200 6.4T CPX Pluggable Co-packaged Optical Engine

Vesta 200 6.4T CPX Pluggable Co-packaged Optical Engine

CPO/NPO will be used extensively in scale up and scale out inside the data center and can also become a highly efficient “client” of the broader transport network, better addressing the original problem of back-to-back grey optics for cases with outboard full spectrum transponders.

AI is driving unprecedented bandwidth growth, but we’re also applying AI to operate networks. How are automation and AI changing network operations?

As networks scale to support AI infrastructure, operational efficiency becomes just as important as raw capacity. It’s not just about building bigger networks—it’s about being able to deploy, optimize, and manage them faster and more reliably.

Software plays a critical role here. At Ciena, we’ve been embedding advanced instrumentation and automation into the network equipment for years. For example, Navigator NCS applications like Automated Deployment Optimizer can now optimize and turn up long haul and subsea wavelengths in hours instead of weeks.

The next step in that evolution is applying AI techniques to network operations. Using rich telemetry and digital-twin validation, operators can automate tasks such as assurance and routing optimization, helping networks scale more efficiently as complexity grows.

If we fast-forward five years, what will define the next era of optical networking innovation?

AI-driven demand is forcing the industry to rethink nearly every dimension of networking, which makes this a particularly exciting time to be working in this field.

We’ll continue to see major advances in electro-optic integration and photonics miniaturization, helping drive further improvements in space and power efficiency. New fiber technologies will also play a role as networks scale to support even greater capacity.

And we’re exploring innovations in areas like thermal management and liquid cooling, which can further improve reliability and efficiency while enabling higher levels of performance.

Ultimately, success will continue to depend on close collaboration with customers and partners to turn new technologies into deployable solutions at scale.