AI is scaling beyond the data center into distributed inference across clouds and the edge. Ciena’s Vini Santos explains how turning that intelligence into real value requires IP networks built for performance, adaptability, and scale.

AI is entering a new phase where the value of intelligence is increasingly realized during inference, when models generate real-time insights, decisions, and interactions. As inference-driven workloads expand and multimodal applications combine text, images, audio, video, and contextual data, the demands placed on networks are changing rapidly.

Traffic patterns are becoming more dynamic, latency requirements are more stringent, and infrastructure is more distributed. For service providers and enterprises planning their next phase of AI deployment, understanding how inference reshapes network requirements will be essential. In this blog, I’ll explore what this shift means for IP network architecture, connectivity, and the opportunities it creates for delivering AI value at scale.

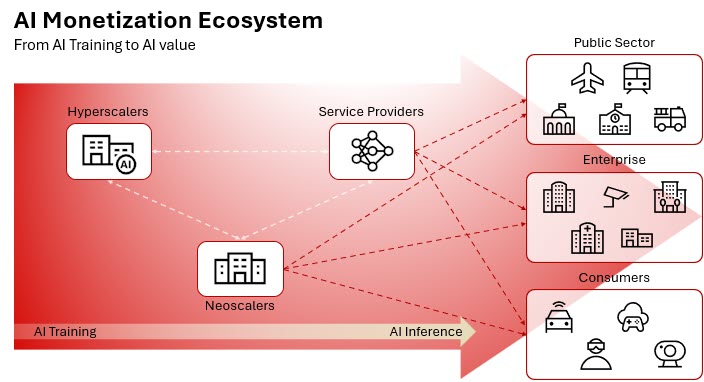

The initial phase of AI investment focused on creating intelligence. Model builders used GPU-powered training clusters, assembled large datasets, and developed increasingly capable models, measuring progress against benchmarks and other industry metrics. Monetization, however, has remained limited. Advertising, largely controlled by the hyperscalers that run the largest AI platforms, remains the dominant revenue model. Subscription-based services are emerging as a growing alternative, signaling a shift toward ongoing, productized AI services that organizations can expand across functions and use cases.

These approaches demonstrate AI’s commercial promise, but they have yet to unlock its full economic value or embed AI deeply in enterprise operations, public services, and digital experiences.

The next wave of AI is about delivering intelligence at scale. As AI moves beyond centralized training environments and into real-world applications, value creation increasingly shifts to inference. For service providers, this shift creates an opportunity to deliver new AI-ready connectivity at the edge and managed services closer to users and their data. For enterprises, it enables AI to be embedded directly into core business processes, customer interactions, and decision-making workflows.

This transition turns inference into the next major network stress test. Traffic becomes more bursty, unpredictable, and performance-sensitive, while multimodal interactions combine text, images, audio, video, and contextual data within a single session. For more on how inference traffic is reshaping network requirements, read this blog: AI inference is the next network stress test.

Alongside hyperscalers, neoscalers are helping expand the market with new platforms and sovereign AI environments, all of which depend on simple, high-performance, and highly scalable IP networks to deliver AI value reliably and efficiently.

Why legacy IP networks fall short

Most IP networks were built under different assumptions: steadier traffic patterns, more centralized applications, and operational models driven by human intervention. As inference becomes distributed across clouds, regions, and edge environments, those assumptions no longer hold.

The rapid rise of agentic AI is accelerating this shift. Unlike traditional applications that respond to explicit user requests, agentic systems operate continuously. They monitor signals, initiate tasks, coordinate across models and services, and adapt in real time. As these agents become embedded in enterprise and consumer workflows, network demand becomes increasingly machine-generated, dynamic, and persistent.

Inference traffic now shifts fluidly based on user behavior, application context, and external events, generating bursty, asymmetric flows across domains. Agentic AI adds a continuous layer of autonomous activity on top of human demand, fundamentally altering the concept of network “peak hour.” Utilization is no longer defined by predictable daily cycles, but by always-on digital actors that drive sustained, globally distributed traffic. Networks built for static provisioning struggle to keep pace, forcing operators to overprovision while still risking congestion and an inconsistent user experience.
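To put rough numbers on the overprovisioning trade-off, this sketch sizes a link statically for its observed peak plus a fixed headroom, then reports average utilization and any later intervals that still exceed that capacity. The demand series, the 25% headroom, and the function names are hypothetical illustrations, not measured data.

```python
from typing import List, Sequence


def size_for_peak(samples_gbps: Sequence[float], headroom: float = 0.25) -> float:
    """Static provisioning: capacity = observed peak plus a fixed headroom (assumed 25%)."""
    return max(samples_gbps) * (1 + headroom)


def average_utilization(samples_gbps: Sequence[float], capacity_gbps: float) -> float:
    """Mean demand divided by provisioned capacity."""
    return sum(samples_gbps) / len(samples_gbps) / capacity_gbps


def congested_intervals(samples_gbps: Sequence[float], capacity_gbps: float) -> List[int]:
    """Indices of intervals where demand exceeds provisioned capacity."""
    return [i for i, s in enumerate(samples_gbps) if s > capacity_gbps]


if __name__ == "__main__":
    # Hypothetical history in Gb/s: steady demand with a single burst.
    history = [10, 10, 10, 10, 10, 10, 10, 50]
    capacity = size_for_peak(history)  # 50 * 1.25 = 62.5 Gb/s
    util = average_utilization(history, capacity)
    print(f"capacity={capacity} Gb/s, average utilization={util:.0%}")

    # A later, larger burst (for example, agent-driven) still overruns the static plan.
    future = [12, 15, 70, 14]
    print("congested intervals:", congested_intervals(future, capacity))
```

With these illustrative numbers the link sits about three-quarters idle on average, yet a single larger burst still exceeds capacity, which is the combination of waste and congestion risk described above.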

Operational fragmentation compounds the issue. In many environments, IP and optical remain separate domains with separate control, tooling, and visibility. That separation slows capacity turn-up and rerouting and increases the manual work required to keep performance stable. Multi-cloud AI deployments expose these limits even more clearly, because designs optimized for single-domain control struggle to maintain consistent behavior across private infrastructure, multiple public clouds, and edge locations.

More bandwidth alone does not solve this problem. Without traffic-aware control and integrated coordination across layers, scaling capacity can also scale complexity, cost, and operational risk.

The IP network is needed to scale AI and enable monetization

For AI to be monetized broadly, the network must become more autonomous, not merely larger. It must steer traffic based on intent and real-time conditions, maintain predictable behavior across domains, and simplify operations as scale increases.

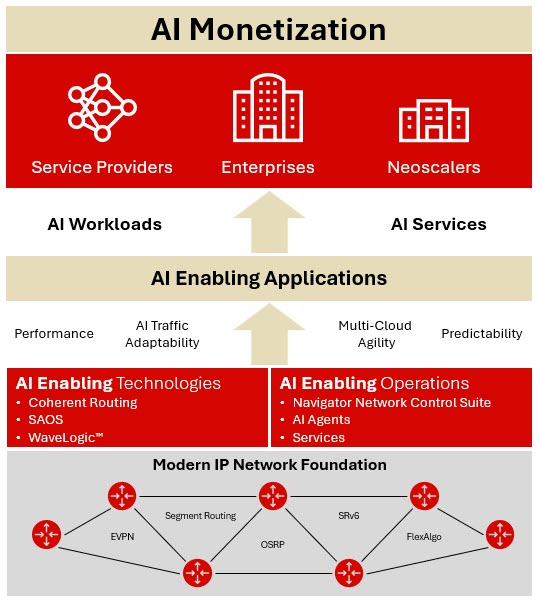

Delivering these capabilities requires a more programmable and secure IP architecture designed for fine-grained traffic control and service isolation. Segment Routing and SRv6 provide the foundation for precise, scalable path control, enabling traffic to be directed dynamically as conditions change. FlexAlgo complements this by enabling multiple path computation algorithms within the same network, allowing traffic classes to follow routes optimized for constraints such as latency, bandwidth, or resiliency. EVPN-based architectures enable practical multi-cloud delivery by providing scalable Layer 2 and Layer 3 connectivity that supports multi-tenancy and workload mobility without sacrificing consistency. At the same time, technologies such as MACsec help secure AI traffic in motion, providing line-rate encryption between network nodes to protect sensitive data as it moves across distributed AI environments.
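FlexAlgo’s core idea, that the same topology can yield different best paths depending on which metric an algorithm minimizes, can be illustrated with a plain Dijkstra run once per metric. This is a simplified sketch, not a router implementation: the four-node topology, the metric values, the node-exclusion constraint, and the `spf` and `FLEX_ALGOS` names are hypothetical. Only the 128 to 255 algorithm-number range comes from the IGP Flexible Algorithm specification (RFC 9350).

```python
import heapq
from typing import AbstractSet, Dict, List, Set, Tuple

# Hypothetical topology: each directed link carries two metrics, echoing how a
# Flexible Algorithm definition selects which metric type its SPF minimizes.
Topology = Dict[str, Dict[str, Dict[str, float]]]

TOPOLOGY: Topology = {
    "A": {"B": {"igp": 10, "delay_us": 900}, "C": {"igp": 30, "delay_us": 200}},
    "B": {"D": {"igp": 10, "delay_us": 900}},
    "C": {"D": {"igp": 30, "delay_us": 200}},
    "D": {},
}

# Algorithm numbers from the flexible-algorithm range (128-255); the mapping
# to metric types here is an illustrative assumption.
FLEX_ALGOS = {128: "igp", 129: "delay_us"}


def spf(topo: Topology, src: str, dst: str, metric: str,
        exclude: AbstractSet[str] = frozenset()) -> Tuple[float, List[str]]:
    """Dijkstra over one metric, skipping excluded nodes (a simplified
    stand-in for FlexAlgo constraints such as excluding links by affinity)."""
    dist: Dict[str, float] = {src: 0.0}
    prev: Dict[str, str] = {}
    heap: List[Tuple[float, str]] = [(0.0, src)]
    done: Set[str] = set()
    while heap:
        d, node = heapq.heappop(heap)
        if node in done:
            continue
        done.add(node)
        if node == dst:
            break
        for nbr, attrs in topo[node].items():
            if nbr in exclude:
                continue
            nd = d + attrs[metric]
            if nd < dist.get(nbr, float("inf")):
                dist[nbr] = nd
                prev[nbr] = node
                heapq.heappush(heap, (nd, nbr))
    if dst not in done:
        raise ValueError(f"no path from {src} to {dst} under {metric!r}")
    # Walk predecessors back from the destination to rebuild the path.
    path = [dst]
    while path[-1] != src:
        path.append(prev[path[-1]])
    return dist[dst], path[::-1]


if __name__ == "__main__":
    for algo, metric in FLEX_ALGOS.items():
        cost, path = spf(TOPOLOGY, "A", "D", metric)
        print(f"algo {algo} ({metric}): {' -> '.join(path)} cost={cost}")
```

The same four routers yield two different A-to-D paths: the IGP-metric algorithm prefers A-B-D, while the delay-metric algorithm prefers A-C-D. In a real network, each participating router computes these per-algorithm trees itself from the advertised algorithm definitions; the sketch only shows the per-metric selection idea.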

Just as important is the convergence of IP and optical layers. AI-driven traffic requires faster bandwidth scaling, rapid rerouting, and end-to-end visibility. When IP and optical operate independently, response times slow and operational burden rises. Convergence enables faster capacity turn-up, improved utilization, and simpler operations while preserving the performance profile that AI workloads demand.

Finally, operating at AI scale requires automation grounded in real-time telemetry. Strong instrumentation reduces reliance on manual intervention and enables predictive optimization as traffic patterns shift.
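One simple way to turn telemetry into a predictive trigger is to forecast link utilization with Holt’s linear (double exponential) smoothing and act before the forecast crosses a threshold, rather than after congestion appears. The class name, smoothing constants, 80% threshold, and three-interval horizon are illustrative assumptions, and the technique is not presented as the method used by any particular product.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class UtilizationForecaster:
    """Holt's linear smoothing over link-utilization samples (0.0 to 1.0).
    All parameter defaults are hypothetical."""
    alpha: float = 0.5      # weight of the newest sample in the level estimate
    beta: float = 0.3       # weight of the newest change in the trend estimate
    threshold: float = 0.8  # utilization at which the loop should act
    level: Optional[float] = None
    trend: float = 0.0

    def update(self, sample: float) -> None:
        if self.level is None:  # the first sample seeds the level
            self.level = sample
            return
        prev_level = self.level
        self.level = self.alpha * sample + (1 - self.alpha) * (prev_level + self.trend)
        self.trend = self.beta * (self.level - prev_level) + (1 - self.beta) * self.trend

    def feed(self, samples: Iterable[float]) -> None:
        for s in samples:
            self.update(s)

    def forecast(self, steps_ahead: int = 1) -> float:
        if self.level is None:
            raise ValueError("no telemetry samples yet")
        return self.level + steps_ahead * self.trend

    def should_act(self, steps_ahead: int = 3) -> bool:
        """True when projected utilization reaches the threshold."""
        return self.forecast(steps_ahead) >= self.threshold


if __name__ == "__main__":
    rising = UtilizationForecaster()
    rising.feed([0.3, 0.4, 0.5, 0.6, 0.7])  # no sample has reached 0.8 yet
    print(round(rising.forecast(3), 3), rising.should_act())

    steady = UtilizationForecaster()
    steady.feed([0.4] * 10)
    print(steady.should_act())
```

For the rising series, the three-interval forecast lands near 0.86 even though no observed sample has crossed 0.8, so the loop would act early; the flat series never triggers. What "act" means, such as rerouting traffic or requesting capacity, is outside this sketch.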

How Ciena enables AI-ready IP networking

Ciena delivers the modern IP networking foundation required to support AI workloads and unlock AI monetization for service providers, enterprises, and neoscalers. Beyond individual products, Ciena brings together a coordinated set of AI-enabling applications, technologies, and operational capabilities designed to transform the IP network itself.

At the core is a clear principle: AI-driven networks must deliver consistent performance under unpredictable demand, while becoming simpler to operate as they evolve.

Built for AI performance. Powered by AI insight.

Ciena’s coherent routers integrate industry-leading WaveLogic™ coherent technology directly into the IP layer, converging IP and optical networking to deliver massive throughput, faster capacity turn-up, and lower power consumption. This integration provides the performance and visibility required to move AI traffic efficiently across core, metro, and edge environments.

Ciena’s routing platforms run on SAOS, a modern network operating system that supports advanced IP capabilities, including Segment Routing, FlexAlgo, EVPN, and MACsec. These technologies enable traffic-aware, multi-cloud architectures that dynamically adapt to AI-driven demand.

Operational intelligence is delivered through Navigator Network Control Suite, where AI-driven analytics and automation — including AI agents — leverage real-time telemetry across IP and optical layers. These applications enable predictive insight, faster root-cause identification, and closed-loop optimization, shifting network operations from reactive troubleshooting to proactive performance assurance.

Together with Ciena’s services expertise, this integrated portfolio of applications, technologies, and operations transforms the IP network into a high-performance, intelligent platform ready to support the next phase of AI growth.

AI-ready IP networking requirements

Where AI value is won

The future of AI will be defined not by bigger models alone, but by how effectively intelligence can be delivered at scale.

As AI moves from centralized data centers to distributed, multimodal inference, the IP network becomes the decisive factor in turning innovation into real-world outcomes. Networks built for a more static era cannot keep pace with the variability and performance sensitivity of AI-driven traffic. With the right IP networking foundation, service providers can deliver new AI-powered services, enterprises can embed intelligence across operations, and neoscalers can scale AI platforms efficiently and securely.

In the AI era, value doesn’t stop at compute. It flows across the IP network.