What is Distributed NFV and why do you need it?

It is estimated to take a Service Provider upwards of 6 months to turn up an existing service, and upwards of 18 months to create and roll out a new service. This is the agility gap. It is the direct result of the numerous steps required to complete such a task, and it is exacerbated by a hardware-based service architecture.

For example, rolling out an existing encryption service to an enterprise is accomplished via an encryptor hardware appliance. Before handing over the encryption service to the enterprise, the Service Provider must perform a multitude of operational tasks. These tasks often include customer relationship management, order fulfillment, inventory and warehouse management, correct configuration retrieval, equipment staging, packing, truck roll delivery, unpacking, physical installation, power connections, network connections, service turn-up and validation, and troubleshooting unforeseen problems that arise somewhere along this long sequence of manual tasks. And whatever can go wrong along the way eventually does.

Although the above real-world example is indeed a lengthy and manually intensive process, there are often even more intermediate tasks not mentioned above, which together result in the dreaded agility gap. To make things worse, this lengthy and error-prone process is followed for each and every network service that requires a new appliance to be shipped out to a customer. Some services can be combined into a single appliance, such as a firewall and router, but often this isn’t the case and multiple physically distinct appliances are required.

There must be a better way! There is: it’s called virtualized network services, and it is the way of the future.

Network Functions Virtualization (NFV) to the rescue

If we move a network service, such as IP routing, into the software domain and relegate the hosting hardware to readily available and well-understood x86 servers, this unleashes a boatload of benefits for both the Service Provider and its customers. Once the server is deployed, it’s simply a matter of downloading whatever new Virtual Network Function (VNF) is required onto the server, and then spinning it up and into service. Think about it: network functions can now be downloaded, updated, patched, scaled up/down, deleted, daisy chained together, and even moved between different servers, as required.

Service Providers can then compete on more than simply the lowest price for a given network service, because the agility gap is essentially eliminated, thus enabling a powerful new differentiator – Time-To-Market (TTM). Virtualized services can also leverage massive automation and orchestration of VNFs hosted on lower cost servers that together lower the overall cost of delivering said services, leading to improved profits as well – a win-win situation for everyone, n’est-ce pas?
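
To make the VNF lifecycle described above a little more concrete, here is a minimal Python sketch of a server hosting a chain of VNFs that can be deployed, scaled, and moved between servers. Every name in it (`Vnf`, `Host`, `deploy`, `scale`, `remove`, `move`, and the server names) is hypothetical and chosen purely for illustration; it is not the API of any orchestrator or of any Ciena product.

```python
from dataclasses import dataclass, field


@dataclass
class Vnf:
    """A hypothetical virtual network function."""
    name: str
    version: str
    vcpus: int = 1


@dataclass
class Host:
    """A hypothetical x86 server hosting an ordered chain of VNFs."""
    name: str
    chain: list = field(default_factory=list)

    def deploy(self, vnf):
        # "Download and spin up": append the VNF to the end of the chain.
        self.chain.append(vnf)

    def scale(self, vnf_name, vcpus):
        # Scale a hosted VNF up or down by changing its vCPU allocation.
        self._find(vnf_name).vcpus = vcpus

    def remove(self, vnf_name):
        # Delete a VNF from this host and return it to the caller.
        vnf = self._find(vnf_name)
        self.chain.remove(vnf)
        return vnf

    def _find(self, vnf_name):
        for vnf in self.chain:
            if vnf.name == vnf_name:
                return vnf
        raise KeyError(vnf_name)


def move(vnf_name, source, target):
    # Move a VNF between servers: remove it from one, deploy it on the other.
    target.deploy(source.remove(vnf_name))


edge = Host("edge-server")
core = Host("data-center-server")
for name in ("router", "firewall", "encryptor"):
    edge.deploy(Vnf(name, version="1.0"))
edge.scale("firewall", vcpus=4)
move("router", edge, core)
print([v.name for v in edge.chain])  # ['firewall', 'encryptor']
print([v.name for v in core.chain])  # ['router']
```

The point of the sketch is simply that each operation becomes a software action against a generic server rather than a truck roll; a real deployment would involve an orchestrator, images, and networking that are deliberately left out here.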

Where do we physically host these VNFs?

Now that network services have migrated from standalone hardware-based appliances into VNF apps running on servers, where should these servers be physically located? VNFs can run essentially anywhere a host server resides, so is one location better than another? Yes, and let’s discuss where and why.

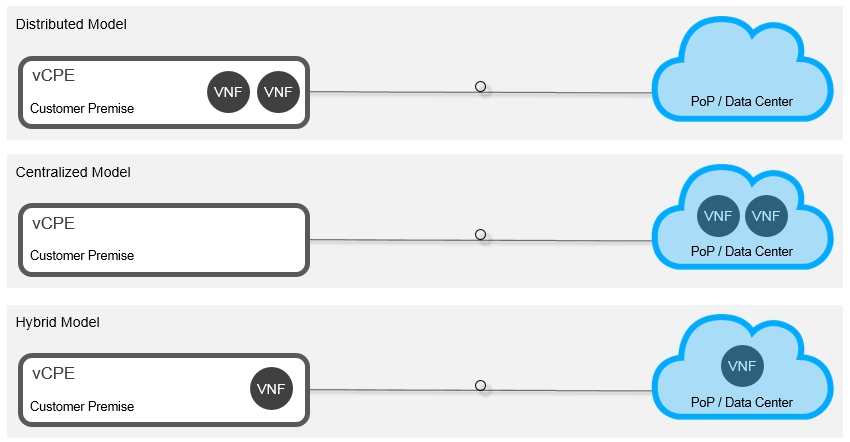

There are three common VNF deployment models, namely distributed, centralized, and hybrid, as illustrated above. Distributed hosts VNFs at the network edge, typically within a customer premise. Centralized hosts VNFs in one location, such as a data center, where massive economies of web-scale resources can be leveraged. Hybrid is a combination of these two deployment models, where some VNFs are hosted at the network edge and others are hosted in a centralized location.

Distributed NFV (D-NFV) makes sense for a variety of good reasons

Although you can technically host VNFs anywhere you have available server resources -- a flexibility benefit that’s highly coveted by many people -- some VNFs simply make sense to be hosted at the network edge, and often explicitly within a customer premise. There are several reasons for this:

Practicality: certain network functions must be hosted at the customer premise for pragmatic reasons. For example, if an enterprise is serious about security, its data must be encrypted before it leaves the building, meaning the encryption VNF is best hosted on a server physically located within the customer premise.

Resilience: some network functions, such as IP-based PBX, must be available even when the WAN connection is down. If this network function is hosted in a distant data center and the network connection to it fails, the enterprise may not be able to make local phone calls, or even calls between cubicles in the same office!

Performance: certain network functions perform better when hosted at the network edge, such as end-to-end Quality of Service (QoS) management or guaranteed Service Level Agreement (SLA) demarcation.

Economics: although some VNFs can be moved to a centralized location and reached via redundant WAN connections, this incurs additional costs that may offset the benefits of hosting the network function in a web-scale data center.

Corporate Policy / Government Regulations: certain security-related network functions, such as encryption, must be hosted within a secure customer premise due to corporate policy or local government regulation.
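
The reasons above amount to a simple placement rule: if any one of them applies to a VNF, it belongs at the edge; otherwise it can be centralized. The Python sketch below expresses that rule and then derives the overall deployment model (distributed, centralized, or hybrid) for a set of VNFs. The class, field, and function names are assumptions made for illustration, and `reporting-portal` is a made-up VNF with no edge requirement; real placement decisions weigh far more than five boolean flags.

```python
from dataclasses import dataclass, astuple


@dataclass
class EdgeReasons:
    """Hypothetical flags, one per reason for edge hosting listed above."""
    on_premise_processing: bool = False      # practicality
    survives_wan_outage: bool = False        # resilience
    edge_performance: bool = False           # performance
    central_wan_cost_too_high: bool = False  # economics
    policy_or_regulation: bool = False       # corporate policy / regulation


def placement(reasons):
    # A VNF goes to the edge as soon as any single reason applies.
    return "edge" if any(astuple(reasons)) else "centralized"


def deployment_model(placements):
    # Classify a whole set of placements as one of the three models.
    kinds = set(placements)
    if not kinds:
        raise ValueError("no VNFs to place")
    if kinds == {"edge"}:
        return "distributed"
    if kinds == {"centralized"}:
        return "centralized"
    return "hybrid"


vnfs = {
    "encryptor": EdgeReasons(on_premise_processing=True, policy_or_regulation=True),
    "ip-pbx": EdgeReasons(survives_wan_outage=True),
    "reporting-portal": EdgeReasons(),  # hypothetical VNF, no edge requirement
}
placements = {name: placement(reasons) for name, reasons in vnfs.items()}
print(placements)
print(deployment_model(placements.values()))  # hybrid
```

Treating the reasons as an "any of" test is a deliberate simplification: it captures the idea that a single hard constraint, such as regulation, is enough to pin a VNF to the customer premise.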

As you can see, some VNFs are better hosted at the network edge, which often means on a customer premise server owned and operated by the Service Provider or by the enterprise itself. Service Providers have the option to deploy an Ethernet Access Device (EAD) equipped with Network Functions Virtualization Infrastructure (NFVI), such as the award-winning new 3906mvi Service Virtualization Switch, which includes the necessary x86 server hardware and base software to host VNFs.

This allows Service Providers to provide MEF CE2.0 certified Ethernet Business Services (EBS) on day one, and then offer value-added network services hosted on the same platform, which leverages the EBS to download, update, scale up/down, and manage the various hosted network services. For example, a Service Provider would install an EAD equipped with a server, turn up an EBS, and then download a virtual router, firewall, and encryptor quickly and securely.

What do Service Providers actually think about D-NFV?

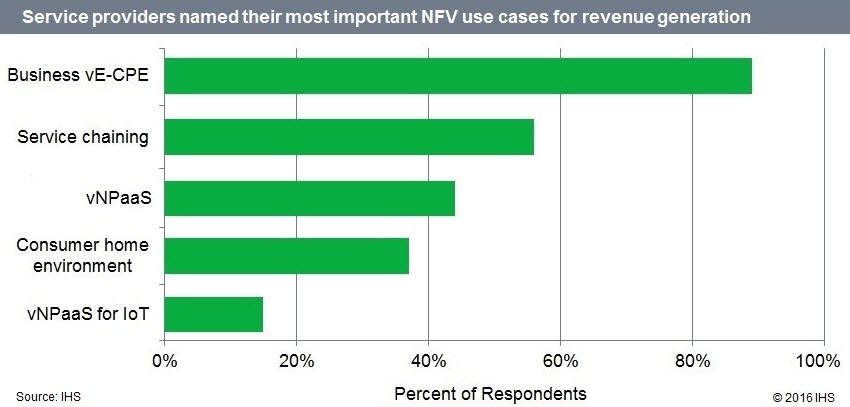

According to the fourth annual SDN/NFV Global Service Provider Survey directed by respected Senior Research Director and Advisor of Carrier Networks, Michael Howard from IHS Markit, Service Providers see great opportunity in hosting VNFs at the network edge. As shown above, almost 90% of respondents named the most important NFV use case for revenue generation to be Business vE-CPE (virtual Enterprise – Customer Premise Equipment), which is essentially the Distributed NFV (D-NFV) use case discussed above. This makes sense when you consider all of the above-mentioned reasons why hosting some VNFs at the network edge is often the right thing to do.

Hosting certain VNFs at the network edge makes sense, but how big is the market for D-NFV services? Well, that depends on how many VNFs you include in the addressable distributed market space, since some VNFs can be hosted essentially anywhere in the end-to-end network. Internal Ciena estimates put the market size to be upwards of US$60B when network services such as managed IP/VPN, managed security, WAN optimization, SD-WAN, and SIP trunking are combined into an addressable market. No wonder Service Providers are focused on NFV-based services, as it makes good sense from an economic and practicality perspective.

The unique personality of D-NFV needs a purpose-built solution

When VNFs are all hosted in a centralized location, such as a large data center, the connections between the various VNFs, orchestrators, and servers are relatively self-contained within the same building and its physical LAN. However, when VNFs are hosted at the network edge, a WAN is added to the mix, and this raises challenges related to distance, latency, security, and network performance that are far less of an issue over a LAN. This gives D-NFV a rather unique personality and thus requires a purpose-built solution if broad adoption of virtual services at the edge is ultimately to happen.

Enterprises expect virtual network service performance and security to be the same as, or better than, those of hardware appliance-based services, so the challenges of the WAN must be addressed within the D-NFV solution in one way or another. Open source software and tools initially developed for the centralized use case struggle with the D-NFV use case, so some optimizations are necessary. It’s the unique personality of the D-NFV use case that’s explicitly addressed with the new Ciena D-NFVI Software.

Multi-vendor D-NFV in action at the MEF16 event in Baltimore

At MEF16, attendees can experience the “Multi-Vendor Service Orchestration Using Open APIs for Service Activation, Performance Monitoring and NFV” Proof of Concept (PoC), which demonstrates the orchestration of performance management and Carrier Ethernet service activation across an SDN and NFV-enabled multi-vendor WAN. This PoC is conducted by CenturyLink in cooperation with Ciena, our Blue Planet division and RAD to showcase the CenturyLink-led, open-source and multi-vendor initiative to develop APIs and Resource Adapters within the MEF LSO framework to automate the end-to-end delivery of agile, assured, and orchestrated Carrier Ethernet and NFV-based services.

In fact, at the MEF Excellence Awards on the last evening of the event, this joint PoC demo between Ciena, CenturyLink and RAD won an award for Best Third Network Proof of Concept.

The Ciena team demonstrates the Proof of Concept demo at MEF16

Competing on time-to-market, and not the lowest price

Service Providers are focused on open ecosystems of vendors to enable greater choice, best-in-breed technology selection, and a more diversified and secure supply chain. A colleague at a recent tradeshow stated “we can get on the openness train, or get run over by it”, and I think he has a point. There’s no stopping the openness juggernaut, and based on the inherent benefits of this new paradigm, I can understand why. As a consumer, I always appreciate greater choice when buying anything, from bread to cars, so why wouldn’t Service Providers want the same thing as well? At Ciena, we’ve been promoting this mindset in our OPn network architecture for several years now, and this mindset is firmly embedded into all of our solution offerings, including our new D-NFV Solution that’s purpose-built for the unique Distributed NFV use case.

The network services may be virtual, but our D-NFV Solution is real. Want to know more? Contact us today.