Multi-Terabit Submarine Cables… Too Big to Fail?

Back in 2010, the Reliability of Global Undersea Cable Communications Infrastructure (ROGUCCI) report found that nearly 100 percent of the world’s intercontinental electronic communications traffic is carried by the undersea cable infrastructure, and there’s no Plan B!

Although this is indeed a sobering fact, given our utter reliance on the global Internet for both consumer and business needs alike, the situation has amplified since 2010 when we take into account the remarkable and ongoing bandwidth consumption growth worldwide. Initial submarine cable information-carrying capacities are increasing by an order of magnitude or more, so one has to address the elephant in the room, or whale in this case… have submarine cables become simply too big to fail? Yes.

The Coherent Effect

Intensity Modulated Direct Detection (IMDD) technology was the optical workhorse for decades, carrying digital bits over optical fibers, overland and undersea, by pulsing transmitting lasers on (1) and off (0) to receivers at the far end of the communications link. Although this worked well, voracious bandwidth demands continued to increase unabated. Turning transmitter lasers on and off ever more quickly became difficult, if not impossible, especially as channels were squeezed closer and closer together using DWDM techniques, causing linear and nonlinear effects to rear their ugly heads. An optical transmission impasse was soon encountered, requiring a new way to carry digital information across optical fibers – enter coherent detection technology, and we’ve not looked back for close to a decade.

Coherent-based communication over optical fibers was researched extensively as far back as the late 1970s and early 1980s, primarily to achieve longer reaches. However, the advent of Erbium Doped Fiber Amplifier (EDFA) technology, coupled with new Dense Wavelength Division Multiplexing (DWDM) techniques, enabled step increases in achievable reach and capacity, relegating coherent-based modem technology development to the proverbial backburner, at least for a couple of decades. When the technological and commercial limitations of EDFA+DWDM techniques became increasingly apparent and problematic, a new technology was required to increase capacity to keep pace with worldwide bandwidth growth and to increase reach to optimize end-to-end submarine network designs. The latter is achieved by eliminating multiple intermediate nodes that previously performed costly and complex optical-electrical-optical (OEO) conversions to achieve the required reaches. The invention of coherent modem technology made many of these intermediate nodes simply unnecessary.

Coherent modems addressed capacity and reach objectives, and a whole lot more, especially when other novel technologies were integrated into new Submarine Line Terminating Equipment (SLTE) including Soft Forward Error Correction (FEC), mathematical mitigation of linear impairments via Digital Signal Processing (DSP), and increasingly dense Application Specific Integrated Circuit (ASIC) chipsets.

The perfect storm of these new and innovative technologies allowed SLTE vendors to usher in a sea change of capacity increases on both existing and new submarine cables. Channel speeds soon leaped from 10Gbps to 100Gbps and were squeezed even closer together resulting in submarine cables capable of carrying terabits of data each and every second.

So, are submarine cables now critical infrastructure and simply too big to fail? In most cases, the answer is a resounding YES. A subsea cable fault that interrupts 120Gbps of traffic is a bad thing, but a fault that interrupts tens of terabits of traffic is another matter entirely. How else would we share selfies? Like, seriously? Business traffic would also be interrupted, which could affect a corporation’s financial viability, or even that of an entire country.

Submarine Cable Fault Causes

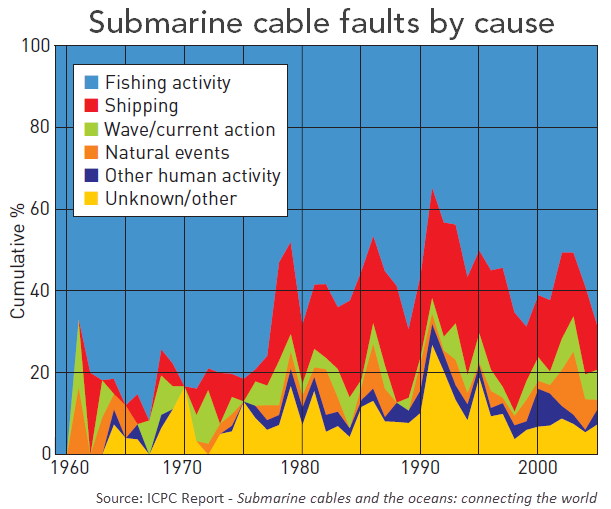

There are numerous causes of submarine cable faults these days, and contrary to popular belief, sharks are simply not one of them. According to the International Cable Protection Committee (ICPC), an organization that provides leadership and guidance on issues related to submarine cable security and reliability, we’re once again our own worst enemy as the primary cause of faults, with ships’ anchoring and fishing activities accounting for 65 to 75 percent of all known cases. Other cable faults are attributed to natural phenomena, such as subsea landslides and ocean currents (< 10 percent), cable component failure (5 percent), and unknown causes (10-20 percent). In the latest ICPC analysis from 2007 to 2014, no recorded cable faults were attributed to our friend the toothy shark – not one.

The International Cable Protection Committee (ICPC)

According to its website, the ICPC is dedicated to the sharing of information for the common interest of all seabed users by representing all those who operate, maintain, and work in every aspect of both the telecom and power submarine cable industry. Although the ICPC performs a critical role in protecting the hundreds of submarine cables resting on the bottoms of various bodies of water, there are those who simply don’t abide by the recommendations and regulations enforced around the world. The result is ongoing damage to submarine cables and manmade traffic outages that occur around the world on a fairly regular basis. This doesn’t even take into account the natural causes of submarine cable faults, such as typhoons, subsea earthquakes, and resulting tsunamis, like the incidents near Taiwan in 2006 and 2009, and Japan in 2011. We won’t even get into the many conspiracies involving the purposeful sabotage of submarine cables.

The bottom line is that even with the best of intentions to protect submarine cables, faults occur nonetheless.

Proactive Mitigation

Assuming that submarine cable faults will undoubtedly occur at some point in time, what can we do to mitigate this inevitable scenario? Well, there are actually many things we can do to reduce the likelihood of cable faults before they occur, whether the cause is man’s activities, the wrath of Mother Nature, or network equipment failures. The latter is simply a fact of life, albeit quite a rare one at around only 5 percent according to the ICPC figures above. This data point is quite impressive when one takes into account the (minimum) 25-year expected lifespan of a wet plant that’s deployed in one of the harshest environments on earth – the cold, dark, and pressurized bottom of the world’s oceans. There are also no recorded failures attributed to the kraken.

Wet plant vendors have incorporated equipment and component redundancies where it makes sense, such as multiple repeater pumps, along with highly reliable design, manufacturing, and deployment processes. I personally believe that wet plants are one of the greatest technological achievements in our industry over the past few decades, albeit an often unknown and underappreciated one by the general public. By monitoring wet plants in a timely manner, along with the SLTE connected at both ends of the submerged cable, network operators receive proactive notification of impending failures, such as noteworthy changes in bit error rates on a given channel, and can address failures before they occur. Gathering polled submarine cable network performance data will allow compute engines to apply big data analytics capable of predicting failures with increasing accuracy in the future. Equipment failures occur, but besides sudden and abrupt failures with no forewarning, network performance changes often indicate something is awry well before a fault occurs.
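As a rough illustration of the kind of trend check described above, here is a minimal sketch that flags a channel whose bit error rate has drifted upward across polled samples. The function name, window sizes, and the one-decade threshold are all hypothetical choices made for this example, not values from any SLTE vendor or standard.

```python
import math
from statistics import mean


def ber_drift_alert(samples, baseline_window=5, recent_window=3, decades=1.0):
    """Return True when recent BER samples sit at least `decades` orders of
    magnitude above the baseline average.

    `samples` is a chronological list of polled bit error rates (each > 0).
    All thresholds here are illustrative assumptions.
    """
    if len(samples) < baseline_window + recent_window:
        return False  # not enough polled data to judge a trend yet

    # Compare averages in log10 space, since BER spans many orders of magnitude.
    baseline = mean(math.log10(s) for s in samples[:baseline_window])
    recent = mean(math.log10(s) for s in samples[-recent_window:])
    return (recent - baseline) >= decades


healthy = [1e-5] * 8
degrading = [1e-5] * 5 + [5e-5, 2e-4, 8e-4]

print(ber_drift_alert(healthy))    # stable channel
print(ber_drift_alert(degrading))  # channel drifting toward failure
```

A production system would likely use far richer analytics, but even a simple comparison like this shows how performance changes can surface before a fault does.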

Given that ships’ anchoring and fishing activities account for the majority of submarine cable faults, there must be a way to minimize these risks. Ongoing education of the naval industry, so that crews don’t unknowingly engage in potentially harmful activities near submarine cables, is one area where the ICPC particularly excels. Another potential way to keep ships from unknowingly engaging in risky activities near submarine cables is to create legally enforced restricted zones around submerged critical infrastructure, although this is difficult to implement, especially due to the intricacies of the high seas.

Communication services can also warn ships approaching submarine cables using an alert-based system that’s integrated into real-time vessel tracking databases. For example, warning messages could be sent to a large container ship cruising near submarine cables so the ship doesn’t unwittingly drop its anchor. The alert could range from a text message warning to a military ship or aircraft sent to “realign” the ship’s actions.
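To make the alerting idea concrete, here is a minimal sketch of a proximity check between a tracked vessel and a cable route. Everything in it is an assumption for illustration: the vessel record layout, the 5 km radius, the 3-knot speed threshold (a slow vessel is treated as more likely to anchor), and the simplification of measuring distance to route waypoints rather than to the cable segments between them.

```python
import math

EARTH_RADIUS_KM = 6371.0


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometers between two lat/lon points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def should_warn(vessel, cable_waypoints, radius_km=5.0, max_speed_knots=3.0):
    """Return True if a slow-moving vessel is within radius_km of any waypoint.

    `vessel` is a hypothetical dict with "lat", "lon", and "speed_knots" keys;
    `cable_waypoints` is a list of (lat, lon) tuples along the cable route.
    """
    nearest = min(
        haversine_km(vessel["lat"], vessel["lon"], lat, lon)
        for lat, lon in cable_waypoints
    )
    return nearest <= radius_km and vessel["speed_knots"] <= max_speed_knots


route = [(50.0, -5.0), (50.0, -6.0)]  # made-up cable waypoints
slow_nearby = {"lat": 50.01, "lon": -5.0, "speed_knots": 1.0}
fast_nearby = {"lat": 50.01, "lon": -5.0, "speed_knots": 15.0}

print(should_warn(slow_nearby, route))  # close and slow: candidate for a warning
print(should_warn(fast_nearby, route))  # close but in transit at speed
```

A real service would pull positions from a live vessel tracking feed and decide how to escalate, but the core test is a distance and behavior check like this one.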

Reactive Mitigation

OK, so a network fault has occurred… now what do we do, besides panic? There are numerous field-proven network protection options available to cable operators today, many of which are deployed throughout the world. For example, intelligent mesh networks are already deployed across the Atlantic Ocean and in the highly vulnerable intra-Asian region along the earthquake-prone “ring of fire” that unfortunately also experiences typhoons and tsunamis! Intelligent mesh networks automatically reroute traffic around cable faults to unused and available capacity on other submarine cables and/or terrestrial networks – and therein lies the problem.

Rerouting traffic around submarine cable faults requires unused and available capacity somewhere else in the network, overland and/or undersea, and this capacity has been available for the past decade. However, as submarine cables are upgraded into the tens of terabits, where will all of this traffic be rerouted when a failure does eventually occur?

If all protection paths are similarly upgraded, then this shouldn’t be an issue, but that’s not always the case for a myriad of technical and economic reasons. Even in capacity protection agreements between submarine cable operators within the same region, the protection path of the partner cable must also scale accordingly to handle the manual traffic handoff. This is one of the primary reasons for deploying new submarine cables along transoceanic corridors served by existing cables – geographic redundancy and diversity.
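The scaling concern can be shown with some simple arithmetic. The sketch below takes the traffic on a failed cable and the spare capacity on each protection path, fills the largest paths first, and reports any shortfall. The path names and capacity figures are invented for the example, and the greedy allocation is only one simple way to do this, not a description of how any particular mesh network reroutes traffic.

```python
def reroute_plan(failed_traffic_tbps, spare_capacity_tbps):
    """Greedily place failed traffic onto protection paths, largest spare first.

    Returns (plan, shortfall): `plan` maps path name to Tbps moved there, and
    `shortfall` is the traffic (Tbps) left with nowhere to go.
    """
    remaining = failed_traffic_tbps
    plan = {}
    paths = sorted(spare_capacity_tbps.items(), key=lambda kv: kv[1], reverse=True)
    for path, spare in paths:
        if remaining <= 0:
            break
        moved = min(spare, remaining)
        if moved > 0:
            plan[path] = moved
            remaining -= moved
    return plan, max(remaining, 0.0)


# Hypothetical numbers: a 20 Tbps cable fails, and protection paths were
# sized back when cables carried far less traffic.
plan, shortfall = reroute_plan(20.0, {"cable_b": 8.0, "terrestrial": 4.0})
print(plan)       # traffic placed on each protection path
print(shortfall)  # traffic left stranded
```

With these made-up numbers, 12 Tbps finds a home and 8 Tbps is stranded, which is exactly the situation that arises when one cable is upgraded and its protection paths are not.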

As submarine and terrestrial networks are seamlessly integrated under intelligent software control, decisions about the best way to route and reroute traffic can then be made, whether the chosen route is overland or undersea. The wet plant should be seen as just another segment within the virtualized underlying network, albeit a rather wet segment. The increased adoption of SDN and big data analytics, coupled with machine learning, allows for increasingly intelligent traffic control even as data patterns continue to change and evolve.

Business Impact

The impact of submarine cable faults and associated outages can be dramatic and even affect the economy of an entire nation that’s connected to the world’s digital economy solely through submarine cables. The impact of cable faults is far greater than simply not being able to post selfies or pictures of your cat. It can disconnect corporations from being able to actively participate in the global digital economy for an unacceptable period of time.

This is why some companies that are replacing onsite compute and storage facilities with cloud services incorporate submarine cable geographic locations into their overall corporate risk assessment, and rightfully so. Fortunately, there are many ways to proactively and reactively mitigate the associated risks, unless one purposely chooses to live on the razor’s edge with regard to network availability, which is very rare these days.

Submarine networks became critical infrastructure many years ago. Given that submarine cables can now carry many terabits of traffic each and every second, the sheer capacities of these cables dictate taking another serious look at how traffic is protected when an inevitable network fault occurs due to man or Mother Nature.

It’s not a matter of “if” a submarine cable fault will occur – it’s a matter of when and where, so prepare now!